Juyoung Lee

Ph.D. Candidate · KAIST UVR Lab

I work on one of the most deceptively hard problems in HCI: making XR interactions good enough for everyday life.

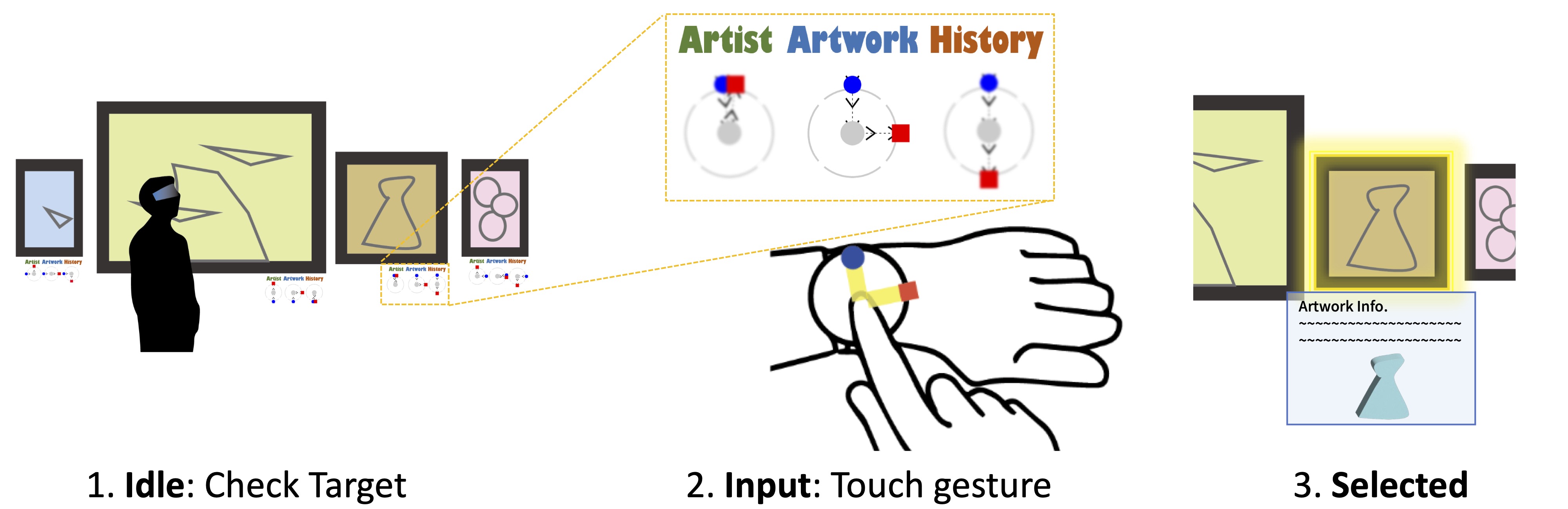

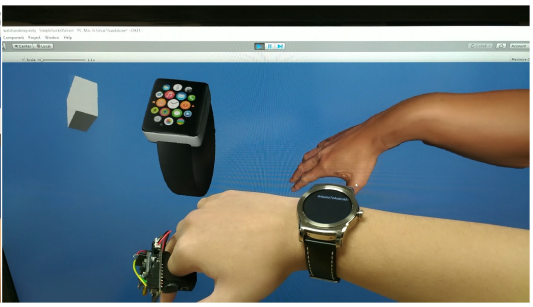

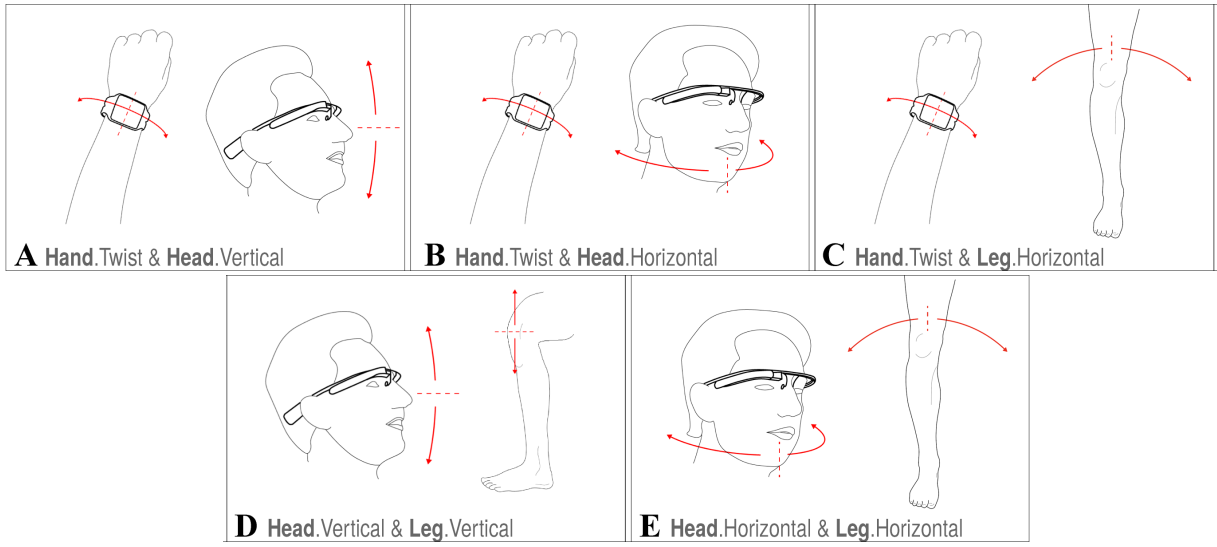

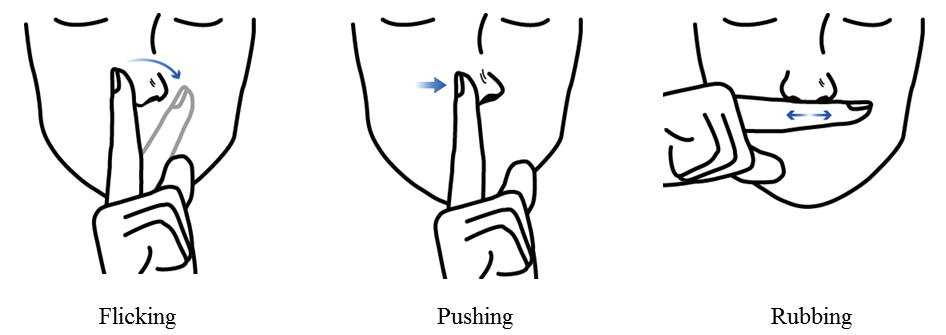

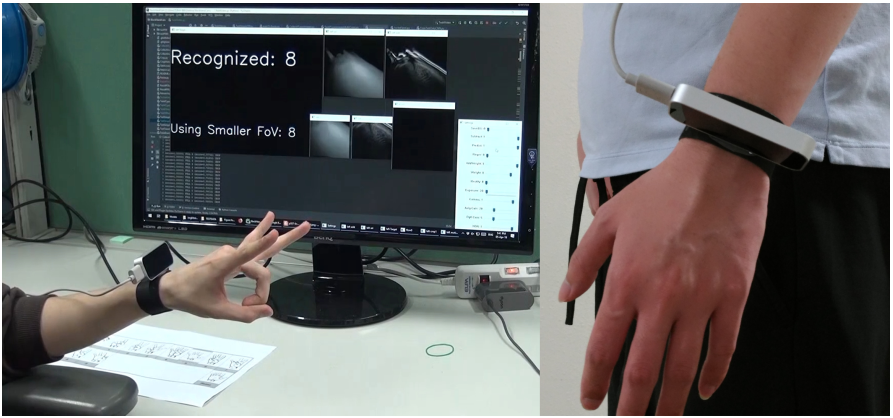

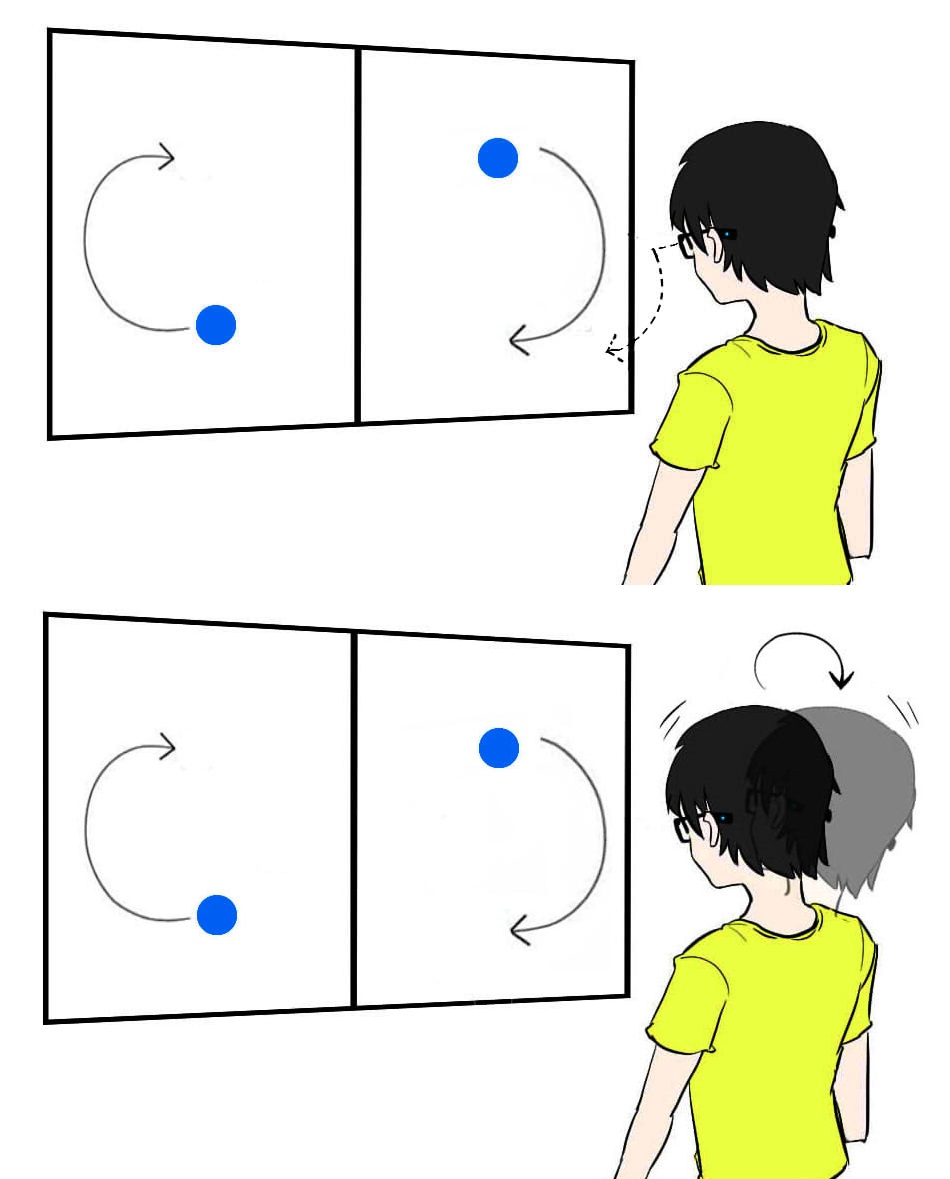

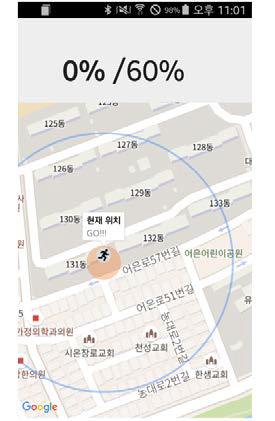

Real-world use demands more than clever demos. Interactions need to be quick, subtle, and robust against false positives, especially when you wear smartglasses for hours rather than for five minutes in a lab study. That requirement is the thread running through my work on gesture, electrooculography (EOG), touch, and force-based input.

Advised by Prof. Woontack Woo · Collaborators: Thad Starner, Kai Kunze, Hui-Shyong Yeo

- Ph.D. candidate, Graduate School of Culture Technology, KAIST (UVR Lab)